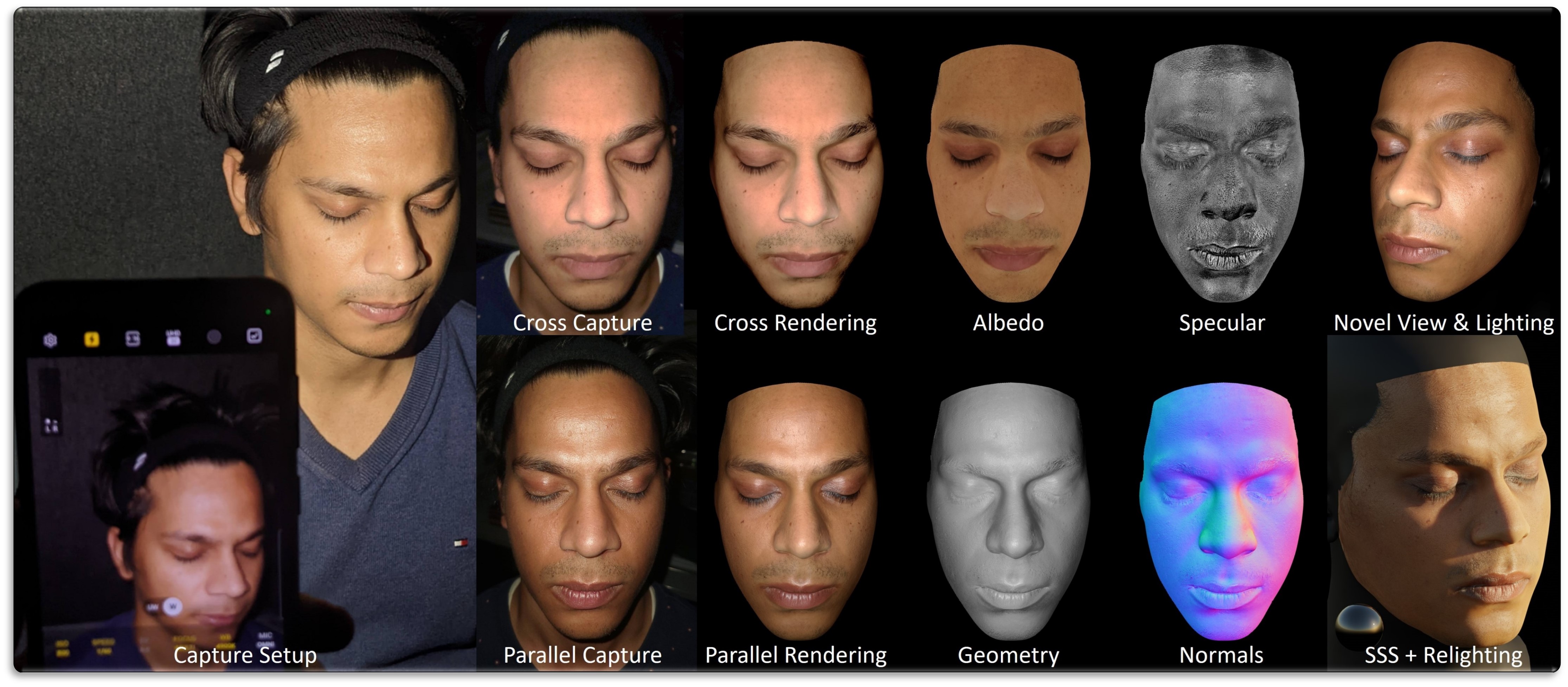

We propose a method for high-quality facial texture reconstruction from RGB images using a novel capture routine based on a single smartphone that we equip with an inexpensive polarization foil. Specifically, we turn the flashlight into a polarized light source and add a polarization filter on top of the camera. For each subject, we use the modified smartphone to record two short sequences of the face in a dark environment under flash illumination: one with cross-polarized and one with parallel-polarized light. From these observations, we reconstruct an explicit surface mesh of the face using structure from motion. We then exploit the co-location of camera and light within a differentiable renderer to optimize the facial textures in an analysis-by-synthesis approach. Our method optimizes high-resolution normal, diffuse albedo, and specular albedo textures using a coarse-to-fine optimization scheme. We show that the optimized textures can be used in a standard rendering pipeline to synthesize high-quality, photo-realistic 3D digital humans in novel environments.
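To illustrate why co-located flash and camera plus two polarization states make the albedos recoverable, here is a minimal sketch that shrinks the idea to a single surface point with a known normal and distance. It is not the paper's renderer or BRDF: the Blinn-Phong specular lobe, the exponent `SHININESS`, and the helper names `shading_basis`, `render`, and `fit_albedo` are hypothetical. It also assumes an idealized polarization model in which the cross-polarized image contains no specular reflection and both filters pass half of the depolarized diffuse light. Because the co-located light makes the half vector equal to the view direction, both lobes depend only on `cos_theta = dot(n, v)`, and the rendering is linear in the albedos. Fitting them to the observations (analysis by synthesis) therefore reduces to a 2×2 least-squares solve, whereas the paper optimizes whole textures by gradient descent through a differentiable renderer.

```python
import math

SHININESS = 32.0  # hypothetical Blinn-Phong exponent (assumption)


def shading_basis(cos_theta, dist, shininess=SHININESS, flash=1.0):
    """Radiance of the diffuse and specular lobes for unit albedo.

    With the flash co-located with the camera, the light direction l equals
    the view direction v, so the half vector h = normalize(l + v) = v and both
    lobes depend only on cos_theta = dot(n, v).
    """
    c = max(cos_theta, 0.0)
    irradiance = flash * c / (dist * dist)          # inverse-square falloff
    diffuse = irradiance / math.pi                  # Lambertian lobe
    norm = (shininess + 2.0) / (2.0 * math.pi)      # Blinn-Phong normalization
    specular = norm * c ** shininess * irradiance   # dot(n, h) == cos_theta
    return diffuse, specular


def render(rho_d, rho_s, cos_theta, dist):
    """Render (cross, parallel) intensities for one surface point.

    Assumed idealized polarization model: the cross-polarized filter blocks
    the polarization-preserving specular reflection, and both filters pass
    half of the depolarized diffuse light.
    """
    diffuse, specular = shading_basis(cos_theta, dist)
    cross = 0.5 * rho_d * diffuse
    parallel = 0.5 * rho_d * diffuse + rho_s * specular
    return cross, parallel


def fit_albedo(samples):
    """Least-squares (rho_d, rho_s) from (cos_theta, dist, cross, parallel) samples."""
    # The renderer is linear in the albedos: cross = a*d, parallel = a*d + b*s.
    # Minimizing sum (a*d - cross)^2 + (a*d + b*s - parallel)^2 gives the
    # normal equations [[2*Saa, Sab], [Sab, Sbb]] [d, s] = [Ra, Rb].
    s_aa = s_ab = s_bb = r_a = r_b = 0.0
    for cos_theta, dist, obs_cross, obs_par in samples:
        diffuse, specular = shading_basis(cos_theta, dist)
        a, b = 0.5 * diffuse, specular
        s_aa += 2.0 * a * a
        s_ab += a * b
        s_bb += b * b
        r_a += a * (obs_cross + obs_par)
        r_b += b * obs_par
    # det >= s_aa * s_bb / 2, so it vanishes only if a lobe is never observed.
    if s_aa == 0.0 or s_bb == 0.0:
        raise ValueError("samples do not constrain both albedos")
    det = s_aa * s_bb - s_ab * s_ab
    rho_d = (r_a * s_bb - s_ab * r_b) / det   # Cramer's rule
    rho_s = (s_aa * r_b - s_ab * r_a) / det
    return rho_d, rho_s


# Usage on synthetic data: render a known material, then recover it.
true_d, true_s = 0.6, 0.3
samples = []
for cos_theta, dist in [(1.0, 0.4), (0.95, 0.45), (0.9, 0.5), (0.7, 0.5), (0.5, 0.6)]:
    cross, par = render(true_d, true_s, cos_theta, dist)
    samples.append((cos_theta, dist, cross, par))
print(fit_albedo(samples))
```

On these noise-free synthetic observations the fit recovers (0.6, 0.3) up to floating-point error. Samples near normal incidence matter most: the specular lobe is sharp, so only points where `cos_theta` is close to 1 constrain `rho_s` well.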

We use our reconstructed geometry and textures to render a face from novel views and under novel illumination. The recovered assets are ready to be used in off-the-shelf rendering software, such as Blender, for photo-realistic relighting.

@InProceedings{azinovic2022polface,
  author    = {Azinovi\'c, Dejan and Maury, Olivier and Hery, Christophe and Nie{\ss}ner, Matthias and Thies, Justus},
  title     = {High-Res Facial Appearance Capture from Polarized Smartphone Images},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  month     = {June},
  year      = {2023}
}